Efficiently and effectively scaling up language model pretraining for best language representation model on GLUE and SuperGLUE - Microsoft Research

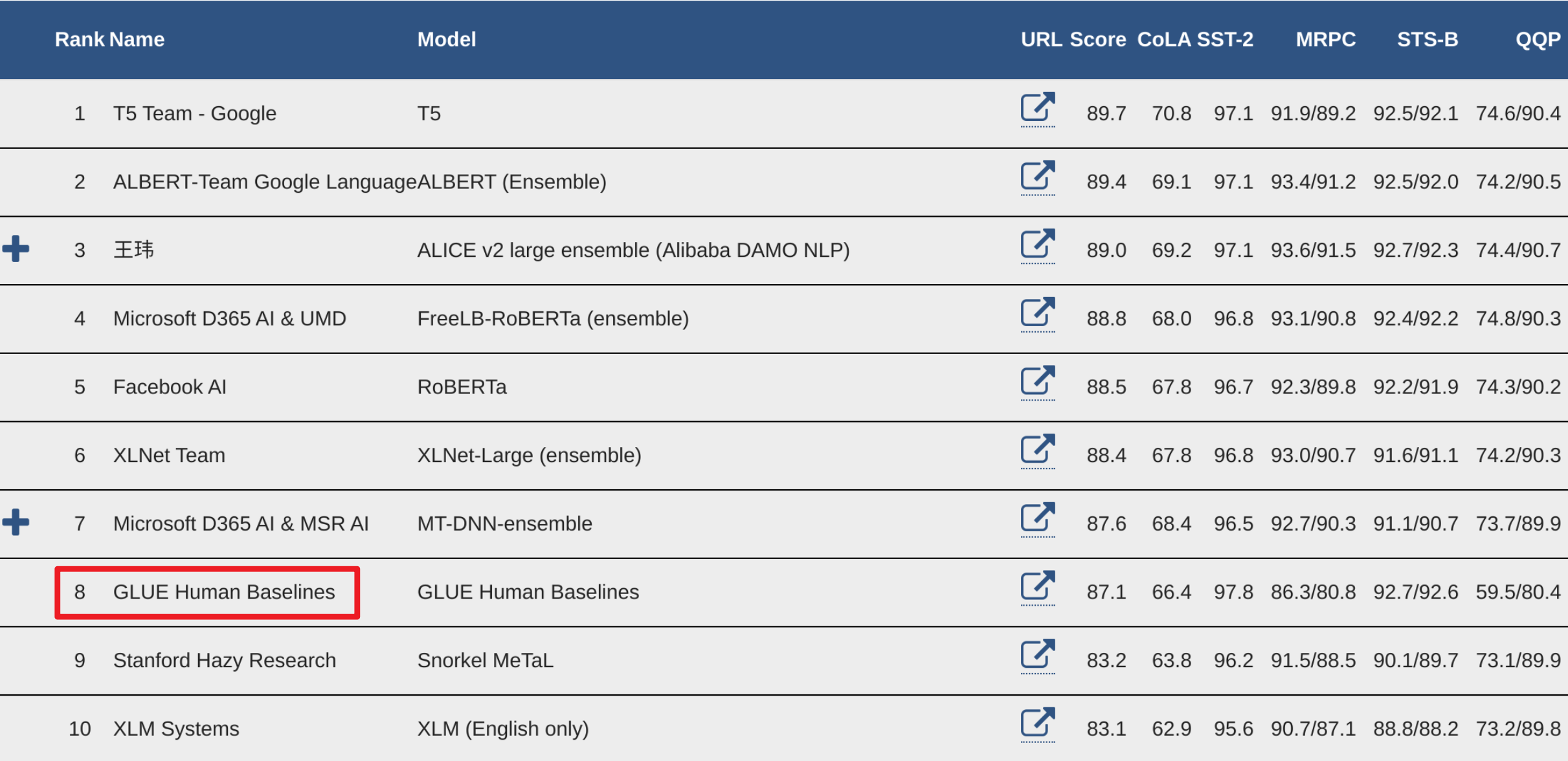

Leaderboard test results of experiments on GLUE tasks. The score for...

GLUE test results returned by the GLUE leaderboard. The first two rows...

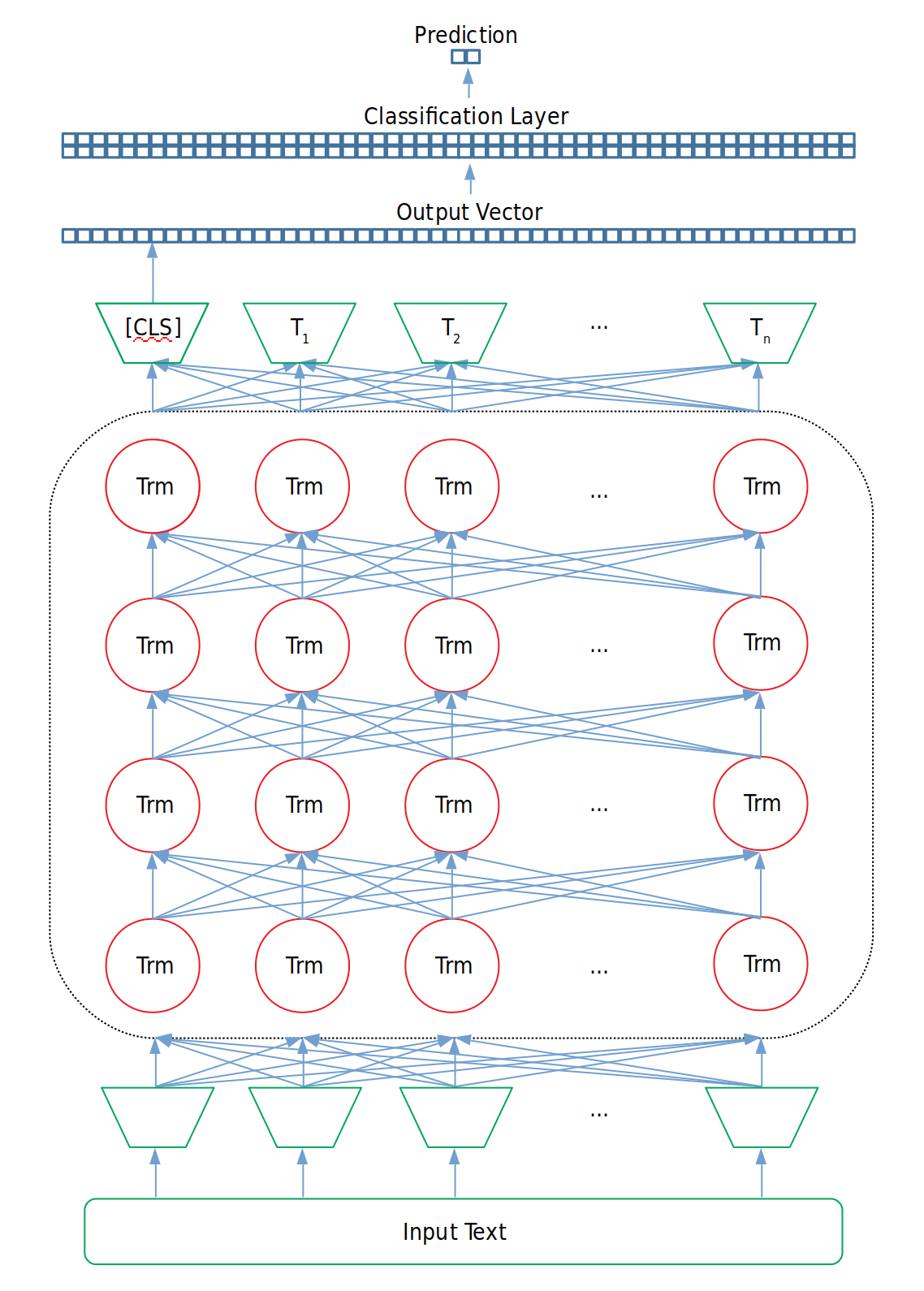

![[papers] RoBERTa: A Robustly Optimized BERT Pretraining Approach | by Grigory Sapunov | Intento](https://miro.medium.com/max/1400/1*gS8J7jm8gMTIeaowyQpVWQ.png)